
11/13/2021

Cobol Read File

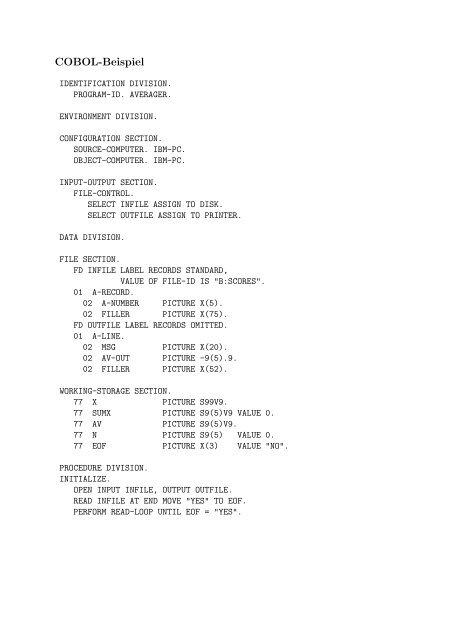

Code for a program that reads data from INPUT-FILE and moves it into OUTPUT-FILE in Cobol declares both files as line sequential:

    SELECT OUT-FILE ASSIGN TO DISK ORGANIZATION IS LINE SEQUENTIAL.
    SELECT IN-FILE ASSIGN TO DISK ORGANIZATION IS LINE SEQUENTIAL.

This program generates multiple line types, including a report heading line, a detail line and a final total line. There are therefore four 01 levels, one for each type.

In the READ statement, INTO ws-data-record names the data item that receives the record read from the file. You must code the NEXT RECORD phrase to retrieve records sequentially from files in dynamic access mode.

Cobrix - COBOL Data Source for Apache Spark

Add mainframe as a source to your data engineering strategy. Seamlessly query your COBOL/EBCDIC binary files as Spark Dataframes and streams.

Motivation

Among the motivations for this project, it is possible to highlight:

- Lack of expertise in the Cobol ecosystem, which makes it hard to integrate mainframes into data engineering strategies
- Lack of support from the open-source community to initiatives in this field
- The overwhelming majority (if not all) of tools to cope with this domain are proprietary
- Several institutions struggle daily to maintain their legacy mainframes, which prevents them from evolving to more modern approaches to data management
- Mainframe data can only take part in data science activities through very expensive investments

The main features are:

- Supports primitive types (although some are "Cobol compiler specific")
- Supports REDEFINES, OCCURS and DEPENDING ON fields (e.g. unchecked unions and variable-size arrays)
- Supports nested structures and arrays (including "flattened" nested names)
- Supports HDFS as well as local file systems
- The COBOL copybooks parser doesn't have a Spark dependency and can be reused for integrating into other data processing engines

We have presented Cobrix at the DataWorks Summit 2019 and Spark Summit 2019 conferences. Screencasts are available for both talks:

- DataWorks Summit 2019 (general Cobrix workflow for hierarchical databases)
- Spark Summit 2019 (more detailed overview of performance optimizations)

You can link against this library in your program at the following coordinates: za.co.absa.cobrix:spark-cobol_2.12:2.4.2. The _2.12 suffix is the Scala version the artifact is built for. To start a Spark shell with it:

    $SPARK_HOME/bin/spark-shell --packages za.co.absa.cobrix:spark-cobol_2.12:2.4.2

This repository contains several standalone example applications in the examples/spark-cobol-app directory. It is a Maven project that contains several examples:

- SparkCobolApp is an example of a Spark job for handling multisegment variable record length files.
- One example's copybook contains examples of the various numeric data types Cobrix supports.
- SparkCodecApp is an example usage of a custom record header parser. This application reads a variable record length file having non-standard RDW headers. In this example the RDW header is 5 bytes instead of 4.
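To make the non-standard header case mentioned above (a 5-byte record header instead of 4) more concrete, here is a minimal Scala sketch of splitting a byte array into records when each record is preceded by a fixed-size header. This is not Cobrix's custom record header parser API. The object RdwSketch, its methods, and the header layout (payload length stored as a big-endian unsigned value in the first two header bytes, all remaining header bytes ignored) are assumptions made purely for illustration; real RDW layouts can differ, for instance in byte order or in whether the length includes the header itself.

```scala
import java.nio.{ByteBuffer, ByteOrder}

object RdwSketch {
  /** Reads the payload length from a record header.
    * ASSUMPTION (hypothetical layout): bytes 0-1 hold the payload length as a
    * big-endian unsigned value; any further header bytes are ignored. */
  def payloadLength(header: Array[Byte]): Int = {
    require(header.length >= 2, "header must contain at least 2 bytes")
    ByteBuffer.wrap(header, 0, 2).order(ByteOrder.BIG_ENDIAN).getShort() & 0xFFFF
  }

  /** Splits `data` into record payloads, skipping a header of `headerSize`
    * bytes in front of every record. A truncated trailing record is dropped. */
  def splitRecords(data: Array[Byte], headerSize: Int): Vector[Array[Byte]] = {
    require(headerSize >= 2, "header size must be at least 2 bytes")

    @annotation.tailrec
    def loop(offset: Int, acc: Vector[Array[Byte]]): Vector[Array[Byte]] =
      if (offset + headerSize > data.length) acc
      else {
        val len   = payloadLength(data.slice(offset, offset + headerSize))
        val start = offset + headerSize
        val end   = start + len
        if (end > data.length) acc // incomplete last record: stop here
        else loop(end, acc :+ data.slice(start, end))
      }

    loop(0, Vector.empty)
  }
}
```

Under these assumptions, RdwSketch.splitRecords(bytes, 5) handles the 5-byte variant, and passing 4 corresponds to the standard header size mentioned in the text.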

The example project can be used to run jobs via spark-submit or spark-shell. Refer to the README.md of that project for a detailed guide on how to run the examples locally and on a cluster. When running mvn clean package in examples/spark-cobol-app, an uber jar will be created.

Getting all Cobrix dependencies

Cobrix's spark-cobol data source depends on the COBOL parser that is a part of Cobrix itself and on scodec libraries. With --packages, Spark resolves these dependencies for you:

    $ spark-shell --packages za.co.absa.cobrix:spark-cobol_2.12:2.4.2

Alternatively, once you have the jars, you can specify them on the spark-shell command line. Here is an example:

    $ spark-shell --master yarn --deploy-mode client --driver-cores 4 --driver-memory 4G --jars spark-cobol_2.12-2.4.2.jar,cobol-parser_2.12-2.4.2.jar,scodec-core_2.12-1.10.3.jar,scodec-bits_2.12-1.1.4.jar,antlr4-runtime-4.7.2.jar
    To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).
    Spark context available as 'sc' (master = yarn, app id = application_1535701365011_2721).
    Using Scala version 2.11.8 (OpenJDK 64-Bit Server VM, Java 1.8.0_171)
    Type in expressions to have them evaluated.
    scala> val df = spark.read.format("cobol").option("copybook", "/data/example1/test3_copybook.cob").load("/data/example1/data")
    df: org.apache.spark.sql.DataFrame = ...

Gathering all dependencies manually may be a tiresome task. A better approach would be to create a jar file that contains all required dependencies (an uber jar, aka fat jar). Creating an uber jar for Cobrix is very easy.

Reading Cobol binary files from HDFS/local and querying them

1. Start a sqlContext.read operation specifying za.co.absa.cobrix.spark.cobol.source as the format.
2. Inform the path to the copybook describing the files through the copybook option. By default the copybook is expected to be in HDFS. You can specify that a copybook is located in the local file system by adding the file:// prefix. Alternatively, instead of providing a path to a copybook file, you can provide the contents of the copybook itself by using .option("copybook_contents", "...copybook contents...").
3. Inform the path to the HDFS directory containing the files: .load("path_to_directory_containing_the_binary_files").
4. Inform the query you would like to run on the Cobol Dataframe.

Below are excerpts of an example whose full version can be found at za.co.absa.cobrix.spark.cobol.examples.SampleApp and za.co.absa.cobrix.spark.cobol.examples.CobolSparkExample:

    val sparkBuilder = SparkSession.builder().appName("Example")
    ...
    .option("copybook", "data/test1_copybook.cob")
    ...
    .filter("RECORD.ID % 2 = 0") // keep only the records whose nested field 'RECORD.ID' is even

In some scenarios Spark is unable to find the "cobol" data source by its short name. In that case you can use the full path to the source class instead.

Streaming Cobol binary files from a directory

1. Import the binary files/stream conversion manager: za.co.absa.spark.cobol.source.streaming.CobolStreamer._
2. Read the binary files contained in the path informed in the creation of the SparkSession as a stream: streamingContext.cobolStream()
3. Apply queries on the stream: stream.filter("some_filter")

Below are excerpts of an example whose full version can be found at za.co.absa.cobrix.spark.cobol.examples.StreamingExample:

    .config("copybook", "path_to_the_copybook")
    .config("path", "path_to_source_directory") // could be both, local or HDFS
    ...
    val streamingContext = new StreamingContext(spark.sparkContext, Seconds(3))

ASCII files can be loaded as well, including text files in which CRLF / LF split the records and multisegment ASCII text files. Each case is enabled through a reader option.
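The three ways of supplying a copybook described in this post (a path that is looked up in HDFS by default, a local path marked with the file:// prefix, or the copybook text itself via copybook_contents) can be captured in a small helper that builds the reader options. The following is only a sketch: the object CopybookOptions and its types are hypothetical names, not part of Cobrix. Only the option keys copybook and copybook_contents and the file:// prefix come from the text.

```scala
object CopybookOptions {
  /** Where the copybook comes from (hypothetical helper types). */
  sealed trait Copybook
  final case class HdfsPath(path: String)   extends Copybook // default location
  final case class LocalPath(path: String)  extends Copybook // local file system
  final case class Inline(contents: String) extends Copybook // copybook text itself

  /** Builds the option map for the chosen copybook source. */
  def toOptions(copybook: Copybook): Map[String, String] = copybook match {
    case HdfsPath(p)  => Map("copybook" -> p)
    case LocalPath(p) =>
      // Add the file:// prefix only if the caller has not already done so.
      Map("copybook" -> (if (p.startsWith("file://")) p else "file://" + p))
    case Inline(c)    => Map("copybook_contents" -> c)
  }
}
```

With Spark and Cobrix on the classpath, the resulting map could be passed to the reader through Spark's DataFrameReader.options, for example spark.read.format("cobol").options(CopybookOptions.toOptions(CopybookOptions.LocalPath("/tmp/test1_copybook.cob"))).load("path_to_directory_containing_the_binary_files"), where the local path is a made-up example.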
Variable-length records are processed in stages. The first stage traverses the records, retrieving their lengths and offsets into structures called indexes. Then the indexes are distributed across the cluster, which allows variable-length records to be processed in parallel. However effective, this strategy may also suffer from excessive shuffling, since indexes may be sent to executors far from the actual data.
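The staged idea described above can be sketched in plain Scala, without Spark: a sequential pass records where each record starts and how long it is, and the resulting index is then cut into chunks that independent workers could process. This is an illustration under stated assumptions, not Cobrix's implementation. IndexSketch, IndexEntry, buildIndex and split are hypothetical names, and the header layout (payload length as a big-endian unsigned value in the first two header bytes) is assumed for the example.

```scala
object IndexSketch {
  /** One index entry: where a record's payload starts and how long it is. */
  final case class IndexEntry(offset: Long, length: Int)

  /** Stage 1: walk the data sequentially and collect offsets and lengths.
    * ASSUMPTION: each record has a `headerSize`-byte header whose first two
    * bytes hold the payload length (big-endian, unsigned). */
  def buildIndex(data: Array[Byte], headerSize: Int = 4): Vector[IndexEntry] = {
    require(headerSize >= 2, "header size must be at least 2 bytes")
    val entries = Vector.newBuilder[IndexEntry]
    var pos = 0
    while (pos + headerSize <= data.length) {
      val len   = ((data(pos) & 0xFF) << 8) | (data(pos + 1) & 0xFF)
      val start = pos + headerSize
      if (start + len > data.length) {
        pos = data.length // truncated trailing record: stop scanning
      } else {
        entries += IndexEntry(start.toLong, len)
        pos = start + len
      }
    }
    entries.result()
  }

  /** Stage 2 (simplified): cut the index into at most `parts` contiguous
    * chunks, each of which could be handed to a separate worker. */
  def split(index: Vector[IndexEntry], parts: Int): Vector[Vector[IndexEntry]] = {
    require(parts > 0, "parts must be positive")
    if (index.isEmpty) Vector.empty
    else {
      val chunkSize = math.ceil(index.size.toDouble / parts).toInt
      index.grouped(chunkSize).toVector
    }
  }
}
```

Keeping each chunk contiguous is a deliberate choice in this sketch: a worker that receives neighbouring entries reads one continuous byte range. The shuffling concern raised above is about where those chunks end up relative to the data, which this local example does not model.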


Author: Zhang
